What is an Activity Stream?

Unlike more traditional data modeling approaches, an activity stream bases all reporting on a single, skinny table. Each row of this table is an individual activity with a corresponding timestamp. Together, the rows record who did what, and when, across a company’s value chain, and they serve as the foundation for the organization’s metrics. This approach reduces the data to exactly what your business cares about and lets users build the reports they need without complex JOINs or heavy SQL queries.
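A minimal sketch of what "one skinny table" means in practice follows. It assumes hypothetical column names (`entity_id`, `activity`, `ts`) and made-up sample rows. Each row answers who, did what, and when, so a metric such as "orders placed in January" is a filter over one table rather than a multi-table join.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Activity:
    entity_id: str  # who (hypothetical column name)
    activity: str   # did what
    ts: datetime    # when


# Hypothetical sample rows; a real stream would usually carry a few more columns
stream = [
    Activity("cust_1", "signed_up", datetime(2023, 1, 3, 9, 0)),
    Activity("cust_1", "placed_order", datetime(2023, 1, 5, 14, 30)),
    Activity("cust_2", "signed_up", datetime(2023, 1, 4, 11, 15)),
    Activity("cust_2", "placed_order", datetime(2023, 1, 9, 8, 45)),
    Activity("cust_2", "placed_order", datetime(2023, 2, 1, 16, 0)),
]


def count_activity(rows, name, start, end):
    """Count one activity type in a half-open time window [start, end), with no joins."""
    return sum(1 for r in rows if r.activity == name and start <= r.ts < end)


january_orders = count_activity(stream, "placed_order", datetime(2023, 1, 1), datetime(2023, 2, 1))
print(january_orders)  # 2
```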

How does an Activity Stream work?

In an Activity Stream model, source data is cleaned, reformatted and renamed to suit downstream needs as soon as it lands. From there, the clean data flows into intermediate stages, where logic is applied to shape it into larger entities. These entities are the foundation from which we filter out and identify activities: the atomic measurement events at the heart of the business. All activities then flow directly into the Activity Stream, ready to supply every reporting need.
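The layered flow described above (staging, intermediate entities, activities, stream) can be sketched in plain Python. This is an illustration under stated assumptions, not the article's Matillion build. In Matillion each function would instead be a job that writes a table. The source fields, the `stg_`/`int_`/`act_` prefixes and the activity names are all hypothetical.

```python
from datetime import datetime

# Hypothetical raw source rows with source-specific column names and formats
RAW_ORDERS = [
    {"CustID": " 42 ", "OrderTS": "2023-01-05T14:30:00", "Status": "COMPLETE"},
    {"CustID": "42", "OrderTS": "2023-01-06T09:00:00", "Status": "CANCELLED"},
]
RAW_SIGNUPS = [{"user": "42", "created": "2023-01-03T09:00:00"}]


# Staging: each source is cleaned, reformatted and renamed exactly once
def stg_orders(raw):
    return [
        {
            "customer_id": r["CustID"].strip(),
            "ordered_at": datetime.fromisoformat(r["OrderTS"]),
            "status": r["Status"].lower(),
        }
        for r in raw
    ]


def stg_signups(raw):
    return [
        {"customer_id": r["user"].strip(), "created_at": datetime.fromisoformat(r["created"])}
        for r in raw
    ]


# Intermediate: staged data is shaped into a business entity
def int_orders(staged):
    return [dict(o, is_completed=(o["status"] == "complete")) for o in staged]


# Activities: each activity is defined in one place, independently of the others
def act_placed_order(orders):
    return [(o["customer_id"], "placed_order", o["ordered_at"]) for o in orders if o["is_completed"]]


def act_signed_up(signups):
    return [(s["customer_id"], "signed_up", s["created_at"]) for s in signups]


# Activity Stream: the union of every activity, ordered on one common timeline
def build_stream():
    orders = int_orders(stg_orders(RAW_ORDERS))
    signups = stg_signups(RAW_SIGNUPS)
    rows = act_placed_order(orders) + act_signed_up(signups)
    return sorted(rows, key=lambda row: row[2])


for row in build_stream():
    print(row)
```

The cancelled order is dropped at the activity step, not in staging. Staged data stays a faithful, reusable copy of the source, and the business rule lives in exactly one activity definition.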

Because this structure is modular, it makes our data model easier to trust and maintain. Four major points demonstrate this:

- Data Identification: Data is sourced and cleaned one time. Manipulation is confined to a single location, making maintenance easy. We can confidently reuse staging data downstream knowing where it’s from and what it looks like.

- Logic Consolidation: For each entity being reported on and each activity defined, logic is stored in one place. This makes troubleshooting much easier and removes doubt about “which version” to use when reporting on specific metrics. Consolidating logic also makes our model easy to scale and manage. Each activity is defined independently and can be added with ease. Entities are reused, which shortens the time to insight and avoids wasted work recreating existing data.

- Ease of Reporting: With access to just the Activity Stream and Context tables, reporting teams get trusted data that is easy to report on, often needing just one or two simple joins to the appropriate context tables.

- Complex Analysis: The structure of the Activity Stream works across departments and entities, so otherwise unrelated events can be analyzed together to show how various parts of your business interact. The modular structure also gives power users more freedom to work with clean data. Source data is conformed into entities using the language of the business, and each of our activities is placed on a common timeline. This enables complex time series analysis and analyses spanning multiple concepts by default.
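The two reporting claims above can be illustrated with a small in-memory SQLite sketch. The first claim is that one join to a context table is usually enough. The second is that a shared timeline makes cross-activity questions simple. The table names, columns and rows are hypothetical, and a production stream would live in the warehouse rather than in SQLite.

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.executescript("""
CREATE TABLE activity_stream (entity_id TEXT, activity TEXT, ts TEXT);
CREATE TABLE customer_context (entity_id TEXT PRIMARY KEY, region TEXT);

INSERT INTO activity_stream VALUES
  ('c1', 'signed_up',     '2023-01-03 09:00:00'),
  ('c1', 'placed_order',  '2023-01-05 14:30:00'),
  ('c2', 'signed_up',     '2023-01-04 11:15:00'),
  ('c2', 'placed_order',  '2023-01-09 08:45:00'),
  ('c2', 'opened_ticket', '2023-01-10 10:00:00');

INSERT INTO customer_context VALUES ('c1', 'EMEA'), ('c2', 'AMER');
""")

# Ease of reporting: orders per region needs a single join to one context table
orders_by_region = con.execute("""
    SELECT c.region, COUNT(*) AS orders
    FROM activity_stream AS a
    JOIN customer_context AS c ON c.entity_id = a.entity_id
    WHERE a.activity = 'placed_order'
    GROUP BY c.region
    ORDER BY c.region
""").fetchall()
print(orders_by_region)  # [('AMER', 1), ('EMEA', 1)]

# Complex analysis: because every activity shares one timeline, the days from
# sign-up to first order come straight from the stream, with no joins at all
days_to_first_order = con.execute("""
    SELECT entity_id,
           julianday(MIN(CASE WHEN activity = 'placed_order' THEN ts END))
         - julianday(MIN(CASE WHEN activity = 'signed_up' THEN ts END)) AS days
    FROM activity_stream
    GROUP BY entity_id
    ORDER BY entity_id
""").fetchall()
print(days_to_first_order)
```

The same pattern extends to activities owned by other departments, such as the hypothetical `opened_ticket` row. Measuring time from an order to a support ticket is the same query shape with different activity names.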

Building in Matillion

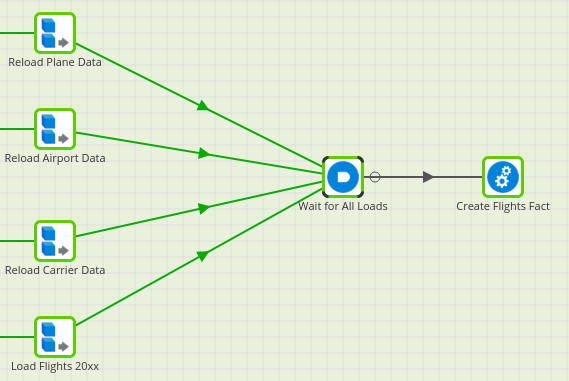

Modeling the Activity Stream in Matillion involves some unique modifications compared with its implementation in other data modeling tools like dbt. The key is ensuring that Matillion runs each job in our model at the appropriate time. Where dbt works out this execution order automatically from the references between models, Matillion instead offers visual tools and a library of components for us to work with. We can use a series of logical components to control the flow and run orchestrations to construct our layers. Each layer of the stream is constructed using run orchestrations and ‘AND’ components, as shown in the image above.

Shown above are three unique staging jobs flowing into a single ‘AND’ component before the intermediate job that uses them. The ‘AND’ component requires every component connected to it to complete before flow can continue. This design ensures that Matillion will not begin the intermediate job until each of the three staging jobs has completed successfully. Implementing this design throughout the Activity Stream is an important safeguard against stale data finding its way into reporting tables.
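The gating behavior of the ‘AND’ component can be mimicked in plain Python as a way to reason about it. This is an analogy, not Matillion's implementation or API. The job functions below are hypothetical stand-ins for the three staging jobs and the intermediate job.

```python
from concurrent.futures import ThreadPoolExecutor


def run_after_all(upstream_jobs, downstream_job):
    """Mimic an 'AND' gate: start the downstream job only after every upstream job succeeds."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(job) for job in upstream_jobs]
        # result() re-raises any exception from a failed job, so a single failure
        # stops the flow before the downstream job can read stale data
        results = [f.result() for f in futures]
    return downstream_job(results)


# Hypothetical stand-ins for staging jobs; each returns the table it built
def stage_orders():
    return "stg_orders"


def stage_customers():
    return "stg_customers"


def stage_products():
    return "stg_products"


def stage_failing():
    raise RuntimeError("source extract failed")


def intermediate_orders(staged_tables):
    return f"int_orders built from {', '.join(staged_tables)}"


result = run_after_all([stage_orders, stage_customers, stage_products], intermediate_orders)
print(result)

try:
    run_after_all([stage_orders, stage_failing], intermediate_orders)
    outcome = "intermediate job ran"
except RuntimeError as exc:
    outcome = f"intermediate job skipped: {exc}"
print(outcome)  # intermediate job skipped: source extract failed
```

The staging jobs run concurrently, just as parallel branches do in an orchestration. The join point is the only place that waits, which keeps the layers fast without letting a partially refreshed layer feed the next one.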

Additionally, the ‘AND’ components can be a good place to introduce Python components. These can send diagnostic information from the job to other systems or trigger other processes on failure.
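As a rough sketch of the kind of logic such a Python component might hold, the code below builds a JSON diagnostic and posts it to a webhook. How a Matillion job exposes its name and status to Python depends on the Matillion version, so this sketch simply takes them as plain arguments. The job name, message and webhook URL are hypothetical.

```python
import json
import urllib.request
from datetime import datetime, timezone


def build_diagnostic(job_name, status, detail):
    """Assemble a small JSON-serializable summary of a job run."""
    return {
        "job": job_name,
        "status": status,
        "detail": detail,
        "reported_at": datetime.now(timezone.utc).isoformat(),
    }


def send_diagnostic(payload, webhook_url):
    """POST the payload as JSON to an alerting endpoint and return the HTTP status code."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


payload = build_diagnostic("int_orders", "FAILED", "stg_products did not complete")
print(json.dumps(payload, indent=2))
# send_diagnostic(payload, "https://example.com/hooks/data-alerts")  # hypothetical URL
```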

In addition to flow components, we can use Matillion’s folder structure to neatly organize the model and increase readability (this of course works best with a rigid job naming convention and disciplined data team). The Activity Stream can quickly grow to a roaring river with lots of data sources, so it’s best to be organized from the start.

Conclusion

With a firm Activity Stream in place, your business can utilize data in new ways and optimize the time spent on reporting. Reports can be built with greater confidence, faster speed and simpler SQL. In addition, the variety of activities built into the stream will present new opportunities for more complex analysis and cross-department reporting.

Contact us or send an email to sales@dataclymer.com to learn more about the Activity Stream and how we at Data Clymer can help your business transform the way it handles data.

Anthony is an Analytics Engineer committed to creating innovative, efficient and creative solutions for clients. With experience as a Business Intelligence Engineer, he specializes in SQL, ETL and dashboarding tools.